As a software engineer, I regularly use AI and tools like Copilot in my work, all well within our company’s privacy policy. In some ways, these tools genuinely boost my productivity, but they also stir up constant questions: Will automation make my job redundant? Will companies rush toward AI and leave essential manual skills behind?

Yet, after years spent learning in public through this blog, I have learnt to pay more attention to the past to better understand the future. History, as journalist Norman Cousins reminds us, is a vast early warning system: it does not repeat itself, but it rhymes, with old problems resurfacing in new forms. Innovations in one field often echo discoveries from others, and as such, insights from those industries are the warnings we should heed most closely.

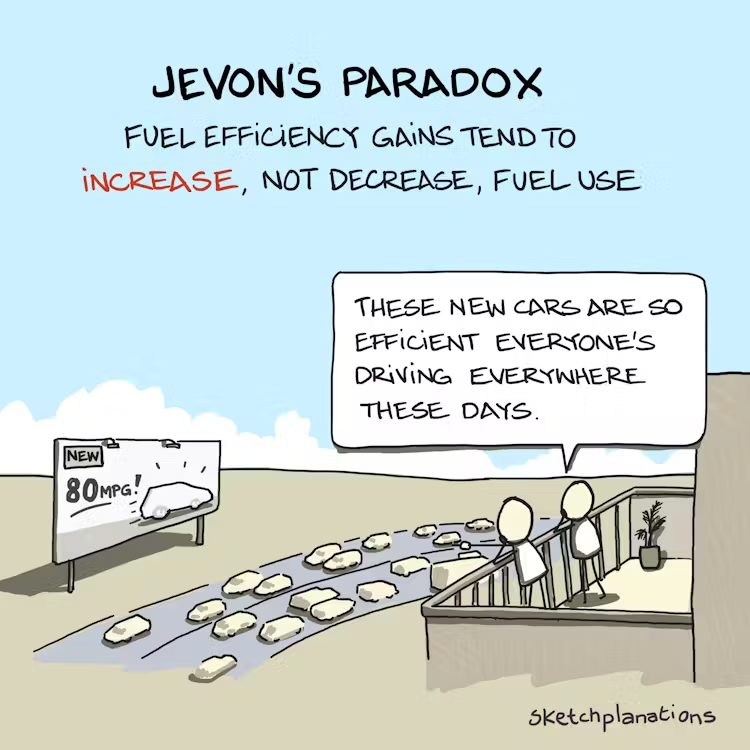

Firstly, let’s talk about the Jevons Paradox. In 1865, the English economist William Stanley Jevons observed that, paradoxically, making coal usage more efficient for steam engines led to increased consumption of coal, not less. This phenomenon, now known as the Jevons Paradox, recurs when efficiency improvements make a resource more accessible, leading to a surge in demand and an increase in total consumption.

For example, improving road network efficiency leads to more driving, not less congestion. Building a reservoir leads to more water consumption, not less water scarcity. Improving computing efficiency leads to more data centres, not less energy usage. The more functionality a tech company releases, the more feedback it gets to add even more functionality. This paradox doesn’t always occur, but it seems especially common where demand hasn’t yet been saturated.

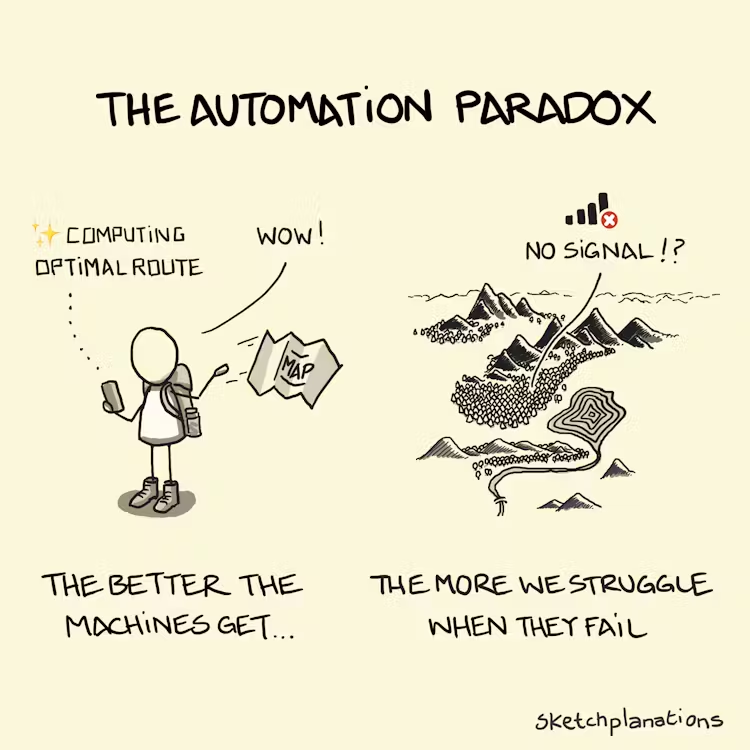

But this means we are becoming more and more dependent on technology. Once we get used to AI, it is hard to go back. This brings us to the Automation Paradox. The better and smarter our tools get, the more lost we find ourselves when they break down or behave unexpectedly. We use Copilot day in and day out, and we might forget how to write a basic piece of code.

We can take a lesson or two from the aviation industry, as they had to deal with the Automation Paradox much, much earlier than our software engineering industry.

A 14-time Top Gun and master of both fighter jets and commercial flight decks, Warren “Van” Vanderburgh was renowned for his calm expertise and relentless commitment to safety training. He also gave a terrific presentation in 1997 about the “children of the magenta line,” a phrase he used to describe pilots’ growing over-reliance on automated systems, which can and does lead to aviation accidents and hundreds of deaths.

Vanderburgh and his team carefully examined numerous accidents, incidents, and violations and found that a striking 68% were linked to poor cockpit automation management.

They realised that many pilots flying highly automated jets were becoming what Vanderburgh called “Children of the Magenta Line”.

“But you see, we have become what I call children of the magenta. [Note: In modern cockpits, the magenta line is the brightly colored route shown on the flight display, a digital path generated by the navigation computer.] You know, we think we have to have those magenta lines on the map and that magenta V-bar that’s steering us toward that line, or for some reason, the plane won’t fly.”

Vanderburgh broke automation down into three levels: first, manual flight (actually hand-flying the plane); second, basic autopilot guidance, where pilots use modes like altitude hold, vertical speed or heading select for parts of the flight; and third, full automation, where the Flight Management System (FMS) essentially flies the plane for extended periods.

Vanderburgh’s central lesson was about judgment, knowing which level of automation fits the moment. He urged pilots not to default to the highest setting just because they could. When things get complicated or start to go wrong, the safest move is often to step down a level: switch from full automation to autopilot guidance, or even from autopilot to hand-flying.

As he said, the risk is not in using FMS, but in assuming the airplane can’t fly without these tools, or that the technology always knows best. Sometimes, the right call is to step back from automation and actively fly the plane, magenta line or not.

Unfortunately, there is no shortage of real-world evidence of the fatal consequences of excessive reliance on automation. Vanderburgh used as an example a tragic aviation accident in Romania, which, as I am Romanian, hit me to the core: the TAROM tragedy in Balotesti, in 1995, where 60 people died. This tragedy was discussed, as I remember, for years in the Romanian media. In this accident, an autothrottle malfunction led to disaster when the copilot didn’t hold the throttles by hand. Something simple, but deadly, if automation falters.

Vanderburgh argued that aviation needs a cultural shift, away from the automatic urge to operate at the highest level of automation at all times.

“To that end, we’ve determined that we have to change the culture that drives us to attempt to operate at the highest levels [of automation] at all times. We created this culture. I mean, the whole industry created this culture, and it needs to be changed.”

He remembered:

“When I started 25 years ago with American Airlines, I used to hear the same advice in recurrent training: Fly the plane first. And we did, we flew the plane first.”

But as cockpits grew more complex and automation became the norm, the conversation shifted to managing systems: more buttons, more screens, more reliance on the autopilot and flight computers. It became common to treat pilots as automation managers rather than as aviators.

That change wasn’t harmless.

“The accident history of the first six years of the ’90s clearly shows automation managers plugging themselves into the ground all over this planet,” Vanderburgh warned.

That’s why his core message is:

“We are not automation managers. We are captains and pilots, and by our aviator skills, we are to ensure the vertical and lateral path of these planes at all times. We will use the wonderful tools of automation that have been provided to us to help us with that task. But when the automation is not maintaining the intended flight path, we will turn it off and maintain the path by our skills.”

Automation excels in routine situations, but it can’t improvise when things go wrong. In Vanderburgh’s words:

“Automation lacks the ability to create flexible responses to unanticipated changes in flight path requirements. So in these circumstances, a lower level of automation should lower workload and thereby preclude us from becoming task-saturated and losing our situation awareness.”

How, then, can this culture of automation dependency be resisted in practice? There are indeed some simple, practical steps.

- Pilots must remain tactilely connected to the aircraft, even with autopilot engaged.

- “… we’re maneuvering, we’re changing configurations, we got power settings going back and forth. The pilot flying, even though the autopilot is on, has got to remain tactilely connected to this plane and mentally flying it, so they will quickly recognise any deviation from acceptable performance parameters or intended flight path.”

- Disconnect automation when it fails to maintain the intended flight path or when manual control is safer.

- Practice manual flying to maintain proficiency and confidence. Automation is a tool, not a replacement for piloting skills.

An Air Facts Journal article about Vanderburgh also mentions a technique for mitigating automation dependency that is eerily similar to rubber duck debugging in software engineering.

“Be your own copilot. This is a very effective technique that is hard to get experienced pilots to follow. In airline operations, the pilot not flying is required to point his finger at the autopilot/flight director status display (scoreboard) to make sure that what is displayed is what was selected, then verbally state what he sees. Do this yourself, point at the scoreboard, and state out loud what you see. You will be surprised at how many times you will catch an error.”

Stepping down in automation: the real lesson for children of the magenta line

In his book Atomic Habits, James Clear describes the “pointing and calling” safety system, widely used on Japanese railways, that raises awareness and dramatically reduces errors. Conductors perform a ritual of pointing at signals, speedometers, and platform edges, while verbally announcing their status at each step. Clear notes that

“pointing and calling is so effective because it raises the level of awareness from a nonconscious habit to a more conscious level. Because the train operators must use their eyes, hands, mouths and ears, they are more likely to notice problems before something goes wrong.”

While automation can transform our workflows and lighten the load in routine situations, it’s not infallible, and it certainly isn’t a substitute for judgment, engagement, or hard-earned skills. The solution, whether in the cockpit or at the keyboard, isn’t to reject technology, but to cultivate a culture that respects both progress and practice, even, or especially, as the tools around us get smarter.

And just as we, in software engineering, are looking back at what happened in aviation in the 1990s, who knows what other industries will look back at ours and take our warnings to heart? After all, Linus’s Law, formulated by Eric S. Raymond and named in honour of Linus Torvalds, of Linux and Git fame, states:

“Given enough eyeballs, all bugs are shallow.”

In other words, given enough context and a relevant audience, the fix to any problem will eventually be obvious to someone. But only if someone, somewhere, is indeed looking.
